At Bespoken, we strive to eat our own dog food. To that end, we recently held a hackathon to build our own Alexa skills. I decided to build a fun game based on the show “The Price Is Right”: it shows you an item and asks you to guess how much it costs. I’m submitting it now – I hope you enjoy it once it is launched!

As part of the core development team, I was able to use our manual and automated testing tools to create my skill quickly, rapidly iterating through develop, test and debug cycles. Now I’m going to show you how I did it, and why I think it gave me an unfair advantage in creating a great skill, fast.

The first tool I used was our speak command in our CLI – getting started with it requires just this command:

    npm install bespoken-tools -g

Once setup, I could run different utterances directly against my skill, saying things like:

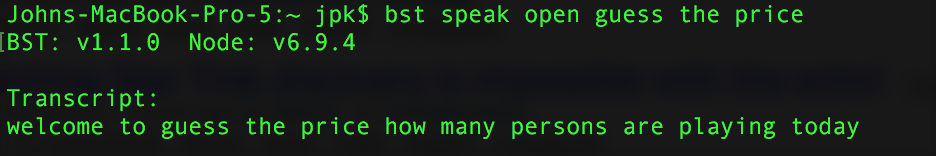

bst speak open guess the price

bst speak two players

With each command, I get back the actual reply from Alexa – including the speech-to-text transcription, any stream URLs, as well as information about cards:

The command uses the actual Alexa Voice Service (AVS), so I did need to register a device with Amazon to use it. The command-line tool guides you through this process, so it’s easy. Once completed, the great thing is I am working with the real Alexa!

I ran through whole sequences of interaction (I kept them in a simple shell script) – to quickly see if my skill was working correctly. It saved me a ton of time! You can read more about the speak command here.
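My script was plain shell, but the same idea can be sketched in Node to match the rest of the code in this post. This is a minimal sketch rather than the script I actually used: `runSequence`, `speak` and the injectable `run` parameter are names made up for illustration, and it assumes the `bst` CLI is installed and a device is already registered.

```javascript
const { execFileSync } = require("child_process");

// Utterances to send, in order. These two come from the session shown earlier.
const utterances = ["open guess the price", "two players"];

// Default runner: shells out to `bst speak <words...>` and returns its stdout.
// Assumption: bst is on the PATH and device registration is already done.
function speak(utterance) {
  return execFileSync("bst", ["speak", ...utterance.split(" ")], {
    encoding: "utf8",
  });
}

// Sends each utterance in turn and collects the replies.
// `run` is injectable so the sequence can be exercised without the real CLI.
function runSequence(list, run = speak) {
  return list.map((utterance) => ({ utterance, reply: run(utterance) }));
}

module.exports = { runSequence, speak, utterances };
```

Calling `runSequence(utterances)` sends the whole conversation through the real Alexa; passing a stub as the second argument is handy for checking the sequence itself without touching the network.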

Once I had the basics of my skill working, I wanted to go beyond just manual testing – I actually wanted to add some automated tests.

For this I turned to our Virtual Alexa project – this is an emulator that mimics the behavior of Alexa. It generates JSON based on the utterances I send to it. Take a look at a sample test here:

    // assert.include comes from chai's assert interface
    const assert = require("chai").assert;
    const bvd = require("virtual-alexa");

    it("Launches successfully", async function() {
        const alexa = bvd.VirtualAlexa.Builder()
            .handler("index.handler") // Lambda function file and name
            .intentSchemaFile("./speechAssets/IntentSchema.json")
            .sampleUtterancesFile("./speechAssets/SampleUtterances.txt")
            .create();

        let reply = await alexa.launch();
        assert.include(reply.response.outputSpeech.ssml, "Welcome to guess the price");

        // Skill asks for number of players
        reply = await alexa.utter("2");
        assert.include(reply.response.outputSpeech.ssml, "what is your name");
        assert.include(reply.response.outputSpeech.ssml, "contestant one");

        // Skill asks for name of player one
        reply = await alexa.utter("john");
        assert.include(reply.response.outputSpeech.ssml, "what is your name");
        assert.include(reply.response.outputSpeech.ssml, "Contestant 2");

        // Skill asks for name of player two
        reply = await alexa.utter("juan");
        assert.include(reply.response.outputSpeech.ssml, "let's start the game");
        assert.include(reply.response.outputSpeech.ssml, "Guess the price");

        // Skill asks the first player to guess the price of an item
        reply = await alexa.utter("200 dollars");
        assert.include(reply.response.outputSpeech.ssml, "the actual price was");
    });
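To make the "generates JSON based on the utterances" idea more concrete, here is a simplified illustration of how an utterance could be turned into an Alexa-style request. It is not Virtual Alexa's actual implementation: the intent names, the sample phrases, the session ID and the helper functions `parseSamples` and `buildRequest` are all hypothetical, and the request is trimmed to a handful of fields.

```javascript
// Sample utterances in the "IntentName phrase" text format.
// Hypothetical intents and phrases, for illustration only.
const sampleUtterances = `
NumberOfPlayersIntent two players
GuessPriceIntent two hundred dollars
`;

// Parse the text into { intent, phrase } pairs, one per non-empty line.
function parseSamples(text) {
  return text
    .split("\n")
    .map((line) => line.trim())
    .filter(Boolean)
    .map((line) => {
      const space = line.indexOf(" ");
      return {
        intent: line.slice(0, space),
        phrase: line.slice(space + 1).toLowerCase(),
      };
    });
}

// Build a trimmed-down IntentRequest for an utterance.
// Simplification: exact phrase match only, and no slot handling.
function buildRequest(utterance, samples) {
  const match = samples.find((s) => s.phrase === utterance.toLowerCase());
  if (!match) {
    throw new Error(`No sample utterance matches "${utterance}"`);
  }
  return {
    version: "1.0",
    session: { new: false, sessionId: "hypothetical-session-id", attributes: {} },
    request: {
      type: "IntentRequest",
      locale: "en-US",
      intent: { name: match.intent, slots: {} },
    },
  };
}

const request = buildRequest("two players", parseSamples(sampleUtterances));
console.log(JSON.stringify(request, null, 2));
```

An object of this general shape is what the Lambda handler receives as its event, which is why the test can assert directly on `reply.response.outputSpeech.ssml` without any speech being involved.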

We previously published a Virtual Alexa example that uses promises; the sample test uses async/await instead, which makes the code even cleaner and more readable. Since I am working with AWS Lambda (which only supports up to Node 6, and so does not yet include native async/await support), I used Babel to transpile my code. You can see the whole project here.
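For a side-by-side feel of the two styles, here is a small sketch that runs the same two steps both ways. The `fakeAlexa` object is a hypothetical stand-in, not the real emulator: it only mimics the shape used in the test, with `launch()` and `utter()` returning promises, so the snippet can run on its own in a Node version that supports async/await.

```javascript
// Builds a reply object shaped like the ones asserted on in the test.
function makeReply(text) {
  return { response: { outputSpeech: { ssml: `<speak>${text}</speak>` } } };
}

// Hypothetical stand-in for the emulator; the replies are made up.
const fakeAlexa = {
  launch: () => Promise.resolve(makeReply("Welcome to guess the price")),
  utter: (text) => Promise.resolve(makeReply(`You said ${text}`)),
};

// Promise style: each step is chained with .then().
function withPromises(alexa) {
  const heard = [];
  return alexa
    .launch()
    .then((reply) => {
      heard.push(reply.response.outputSpeech.ssml);
      return alexa.utter("2");
    })
    .then((reply) => {
      heard.push(reply.response.outputSpeech.ssml);
      return heard;
    });
}

// async/await style: the same steps, read top to bottom.
async function withAsyncAwait(alexa) {
  const heard = [];
  let reply = await alexa.launch();
  heard.push(reply.response.outputSpeech.ssml);
  reply = await alexa.utter("2");
  heard.push(reply.response.outputSpeech.ssml);
  return heard;
}

// Run both against the stand-in and check they collected the same replies.
const bothStyles = Promise.all([
  withPromises(fakeAlexa),
  withAsyncAwait(fakeAlexa),
]);
bothStyles.then(([a, b]) => {
  console.log(JSON.stringify(a) === JSON.stringify(b));
});
```

The behavior is identical; the difference is that the async/await version needs no nesting or explicit `return` of the next promise, which matters more as the conversation gets longer.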

These unit tests are easy to write, and gave me a great deal of confidence in my code and my ability to refactor and change it as I go forward.

I hope that you find these manual and automated testing tools as useful and easy to use as I did. Talk to me on Gitter or in the Alexa Slack channel – @jperata. Or just add a comment below. I'd love to hear your feedback and questions!

